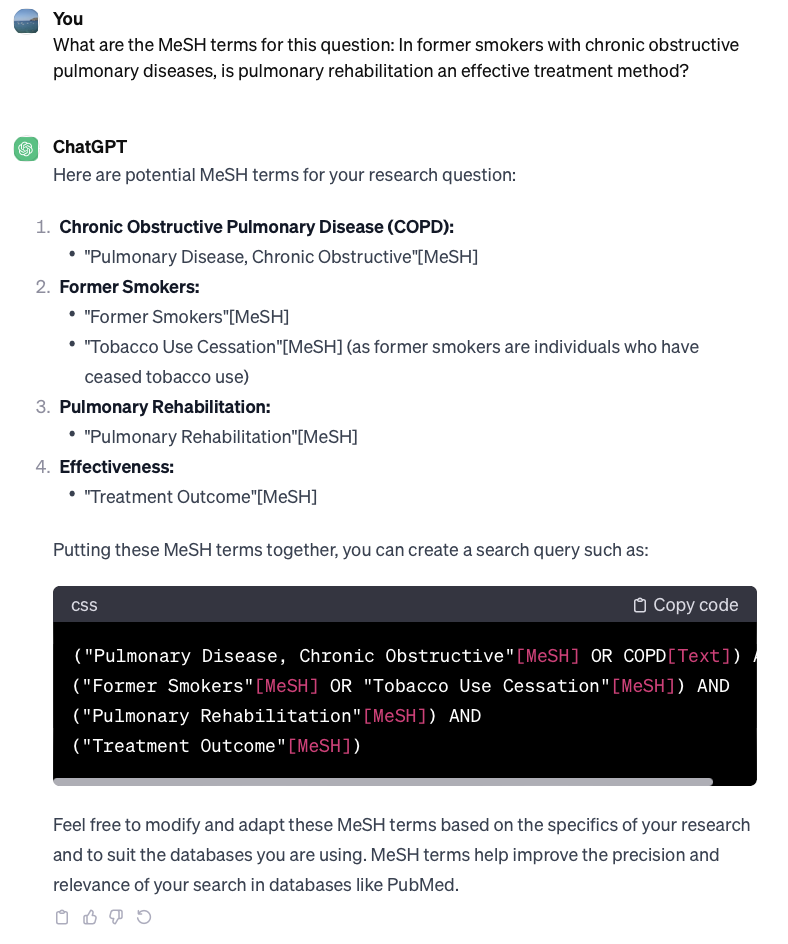

In an era where artificial intelligence tools are becoming increasingly integrated into our research and daily workflows, it’s important to remember that even the most sophisticated AI systems can make mistakes, sometimes with information that is easily verifiable. Let’s use a very Montreal example: hockey.

Simple Question, Contradictory Answers

Recently, I conducted a simple experiment with Perplexity, an AI tool that markets itself as a one-stop shop for research and general questions. Its interface features dedicated tabs for Business, Arts & Culture, Tech & Science, Entertainment, and Sports, and its marketing emails actively encourage users to rely on it for everyday tasks, including looking up sports statistics like “NBA scores from last night.”

I asked a straightforward question: “Who has the most overtime goals in the NHL in the 2025-2026 season as of right now.” Note: this question was asked on January 21st, 2026.

The AI’s initial response seemed confident and specific. After reviewing 10 sources, it told me that as of January 21st, 2026, there was a six-way tie for the league lead, with players like J.T. Miller, Steven Stamkos, and Adrian Kempe each having 3 overtime goals.

Fair enough. Six players tied at the top. A reasonable answer for an in-season statistic question.

But then I asked a follow-up question. Because I’m a Montreal Canadiens fan, and something wasn’t adding up.

“How many overtime goals does Cole Caufield have this season?”

After reviewing the same 10 sources, the AI confidently replied that Cole Caufield had 4 overtime goals in the 2025-2026 NHL season.

Wait a minute. If six players were tied for the lead with 3 goals each, how could Cole Caufield have 4 overtime goals and not be mentioned as the leader?

When I pointed out this logical contradiction, the AI had no choice but to acknowledge its error. It confirmed that yes, if Caufield had 4 overtime goals and no other player had more than 3, then he would indeed be the number one overtime goal scorer for the season, contradicting its original answer entirely.
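For readers who like to see the logic spelled out, the contradiction can be written as a small consistency check. This is only an illustrative sketch: the function name `find_contradictions` is hypothetical, and the numbers are the AI's own claims from the conversation above, not verified NHL statistics. Only the three players the AI named are included; the other three in the claimed six-way tie were not listed here, so they are left out.

```python
def find_contradictions(claimed_leaders, claimed_lead_total, other_claims):
    """Return players whose separately claimed totals conflict with the claimed lead.

    A conflict means the player either has more than the claimed leading total,
    or matches it without being listed among the leaders.
    """
    conflicts = []
    for player, total in other_claims.items():
        beats_lead = total > claimed_lead_total
        ties_but_unlisted = (
            total == claimed_lead_total and player not in claimed_leaders
        )
        if beats_lead or ties_but_unlisted:
            conflicts.append(player)
    return conflicts


# First answer from the AI: a tie for the lead at 3 overtime goals.
# (Unverified claims; only the named players are included.)
claimed_leaders = {"J.T. Miller", "Steven Stamkos", "Adrian Kempe"}
claimed_lead_total = 3

# Second answer from the AI, in the same conversation (also unverified).
follow_up_claims = {"Cole Caufield": 4}

conflicts = find_contradictions(claimed_leaders, claimed_lead_total, follow_up_claims)
print(conflicts)  # ['Cole Caufield']
```

The check does not tell you which answer is right. It only shows that both cannot be true at once, which is the signal to go and verify against an authoritative source.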

What This Teaches Us

This simple example illustrates several critical lessons for students, researchers, and anyone using AI tools.

1. AI Tools Make Mistakes Even With Verifiable Facts

This wasn’t a matter of opinion or interpretation. Overtime goals in the NHL are publicly tracked statistics that should be straightforward to verify. Yet the AI provided contradictory information within the same conversation.

2. Confidence Doesn’t Equal Accuracy

The AI presented both answers with equal confidence, citing sources each time. A confident tone and references to sources don’t guarantee correctness.

3. Critical Thinking is Your Best Tool

The error only became apparent when I asked a follow-up question and noticed the logical inconsistency. Passive acceptance of the first answer would have left me with incorrect information.

4. Always Verify Important Information

Admittedly, this particular error had low stakes. No one’s getting hurt if I mistakenly credit the wrong player with the overtime goal lead. But the same AI tool that fails on sports statistics could just as easily provide incorrect information about medication dosages, historical dates for your research paper, or citation requirements for your thesis. If you’re relying on AI-generated information for academic work, professional decisions, or anything where accuracy matters, always verify the facts through authoritative primary sources.

In this case, the NHL’s official statistics page would have provided the correct answer in seconds. No contradictions, no confusion, just reliable data from the source.

Which brings me to my next point…

5. Some Questions Don’t Need AI

Why ask an AI for NHL overtime goal leaders when you could check NHL.com’s official statistics in seconds? For straightforward, easily verifiable facts (sports scores, current weather, stock prices, published statistics, etc.), going directly to the authoritative source is often faster, more reliable, and more appropriate than routing the question through an AI tool.

6. AI is a Tool, Not an Authority

Generative AI tools are powerful assistants for brainstorming, drafting, and exploring ideas, but they should not be treated as authoritative sources of factual information without verification.

Best Practices When Using AI

As you integrate AI tools into your academic work, keep these principles in mind:

- Cross-reference important facts with authoritative sources

- Question contradictions when you notice them

- Maintain healthy skepticism, even with correct-sounding answers

- Use AI as a starting point, not an endpoint, for research

- Cite primary sources, not AI-generated summaries

The Bottom Line

Artificial intelligence is a remarkable technology that can enhance productivity and learning, but it’s not perfect. The responsibility for accuracy still rests with you, the human user. Think of AI as a well-meaning but occasionally confused assistant rather than an all-knowing oracle.

The next time AI gives you an answer, ask yourself: Does this make sense? Can I verify this? What would happen if this information were wrong? Your critical thinking skills are more valuable than ever in the age of AI.

Have you encountered AI making verifiable errors? Share your experiences in the comments below. And remember: The McGill Libraries team is available to help you find authoritative sources for your academic work.
